Read more of this story at Slashdot.

Ward Christensen, co-inventor of the computer bulletin board system (BBS), has died at age 78 in Rolling Meadows, Illinois. He was found deceased at his home on Friday after friends requested a wellness check. Christensen, along with Randy Suess, created the first BBS in Chicago in 1978, leading to an important cultural era of digital community-building that presaged much of our online world today.

In the 1980s and 1990s, BBSes introduced many home computer users to multiplayer online gaming, message boards, and online community building in an era before the Internet became widely available to people outside of science and academia. They also gave rise to the shareware gaming scene that led to companies like Epic Games today.

Friends and associates remember Christensen as humble and unassuming, a quiet innovator who never sought the spotlight for his groundbreaking work. Despite creating one of the foundational technologies of the digital age, Christensen maintained a low profile throughout his life, content with his long-standing career at IBM and showing no bitterness or sense of missed opportunity as the Internet age dawned.

After more than nine months in an unusual, highly elliptical orbit, the US military's X-37B spaceplane will soon begin dipping its wings into Earth's atmosphere to lower its altitude before eventually coming back to Earth for a runway landing, the Space Force said Thursday.

The aerobraking maneuvers will use a series of passes through the uppermost fringes of the atmosphere to gradually reduce the spaceplane's speed with aerodynamic drag while expending minimal fuel. In orbital mechanics terms, this reduction in velocity near the low point of the orbit will bring the apogee, or high point, of the X-37B's orbit closer to Earth.
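The link between losing speed and a lower apogee can be sketched with the vis-viva equation, v² = μ(2/r − 1/a). The snippet below is only an illustration under stated assumptions: the function names are hypothetical, the orbit altitudes and the speed lost per pass are made-up inputs rather than figures from the Space Force, and drag is modeled as a single instantaneous slowdown at perigee.

```python
import math

MU_EARTH = 398_600.4418  # km^3/s^2, Earth's gravitational parameter
R_EARTH = 6_378.137      # km, Earth's equatorial radius


def perigee_speed_km_s(perigee_alt_km: float, apogee_alt_km: float) -> float:
    """Speed at perigee for an orbit with the given altitudes (vis-viva)."""
    r_p = R_EARTH + perigee_alt_km
    r_a = R_EARTH + apogee_alt_km
    a = (r_p + r_a) / 2.0  # semi-major axis
    return math.sqrt(MU_EARTH * (2.0 / r_p - 1.0 / a))


def apogee_altitude_km(perigee_alt_km: float, speed_at_perigee_km_s: float) -> float:
    """Apogee altitude implied by a given speed at perigee (bound orbits only)."""
    r_p = R_EARTH + perigee_alt_km
    # Rearranged vis-viva: 1/a = 2/r - v^2/mu
    a = 1.0 / (2.0 / r_p - speed_at_perigee_km_s ** 2 / MU_EARTH)
    r_a = 2.0 * a - r_p
    return r_a - R_EARTH


# Hypothetical highly elliptical orbit (assumed values, not the X-37B's real orbit).
PERIGEE_ALT = 300.0      # km
APOGEE_ALT = 38_000.0    # km
SPEED_LOST = 0.010       # km/s shed to drag in one pass (assumed)

v_before = perigee_speed_km_s(PERIGEE_ALT, APOGEE_ALT)
apogee_after = apogee_altitude_km(PERIGEE_ALT, v_before - SPEED_LOST)

print(f"apogee before pass: {APOGEE_ALT:.0f} km")
print(f"apogee after pass:  {apogee_after:.0f} km")
```

In this simplified model each pass leaves the perigee where it was and trims the apogee, which is why repeated passes gradually shrink the orbit without large engine burns.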

Bleeding energy

The Space Force called the aerobraking a "novel space maneuver" and said its purpose was to allow the X-37B to "safely dispose of its service module components in accordance with recognized standards for space debris mitigation."

Tesla has issued a recall for effectively every Cybertruck it’s delivered to customers due to a fault that’s causing the vehicle’s accelerator pedal to get stuck.

According to the National Highway Traffic Safety Administration (NHTSA) on Wednesday, the defect can result in the pedal pad dislodging and becoming trapped in the vehicle’s interior trim when “high force is applied.”

The fault was caused by an “unapproved change” that introduced “lubricant (soap)” during the assembly of the accelerator pedals, which reduced the retention of the pad, the recall notice states. The truck’s brakes will still function if the accelerator pedal becomes trapped, though this obviously isn’t an ideal workaround.

The recall impacts “all Model Year (‘MY’) 2024 Cybertruck vehicles manufactured from November 13, 2023, to April 4, 2024,” with the fault estimated to be present in 100 percent of the total 3,878 vehicles. This is essentially every Cybertruck delivered to customers since its launch event last year.

A recall seemed to be inevitable after Cybertruck customers were reportedly notified earlier this week that their deliveries were being delayed, with at least one owner being informed by their vehicle dealership that the truck was being recalled over its accelerator pedal. The issue was also highlighted by another Cybertruck owner on TikTok, showing how the fault “held the accelerator down 100 percent, full throttle.”


The timeline reported in the NHTSA filing says that Tesla was first notified of the defective accelerator pedals on March 31st, followed by a second report on April 3rd. The company completed internal assessments to find the cause on April 12th before voluntarily issuing a recall. As of Monday this week, Tesla said it isn’t aware of any “collisions, injuries, or deaths” attributed to the pedal fault.

Tesla is notifying its stores and service centers of the issue “on or around” April 19th and has committed to replacing or reworking the pedals on recalled vehicles at no charge to Cybertruck owners. Any trucks produced from April 17th onward will also be equipped with a new accelerator pedal component and part number.

This is actually the second of Tesla’s many recalls to affect the Cybertruck, but it is the most significant. The first came in February, when the company recalled 2 million Tesla vehicles in the US because the font on the warning lights panel was too small to comply with safety standards, though that was resolved with a software update.

Tesla fans have taken issue with the word “recall” in the past when the company has proven adept at fixing its problems through over-the-air software updates. But they likely will have to admit that, in this case, the terminology applies.


Cinnamon (Photo by Hoberman Collection/Universal Images Group via Getty Images) (credit: Getty | Hoberman Collection)

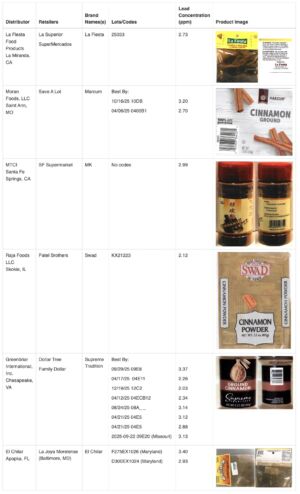

Six different ground cinnamon products sold at retailers including Save A Lot, Dollar Tree, and Family Dollar contain elevated levels of lead and should be recalled and thrown away immediately, the US Food and Drug Administration announced Wednesday.

The brands are La Fiesta, Marcum, MK, Swad, Supreme Tradition, and El Chilar, and the products are sold in plastic spice bottles or in bags at various retailers. The FDA has contacted the manufacturers to urge them to issue voluntary recalls, though it has not been able to reach one of the firms, MTCI, which distributes the MK-branded cinnamon.

The announcement comes amid a nationwide outbreak of lead poisoning in young children linked to cinnamon applesauce pouches contaminated with lead and chromium. In that case, it's believed that a spice grinder in Ecuador intentionally added extreme levels of lead chromate to cinnamon imported from Sri Lanka, likely to increase its weight and/or improve its appearance. Food manufacturer Austrofoods then added the heavily contaminated cinnamon, without any testing, to cinnamon applesauce pouches marketed to toddlers and young children across the US. In the latest update, the Centers for Disease Control and Prevention has identified 468 cases of lead poisoning that have been linked to the cinnamon applesauce pouches. The cases span 44 states and are mostly in very young children.